“Leaving out the best bit” project management anti-pattern

When I was at university in the 90s, studying computer science, my final-year bachelor project was a "fractal raytracer".

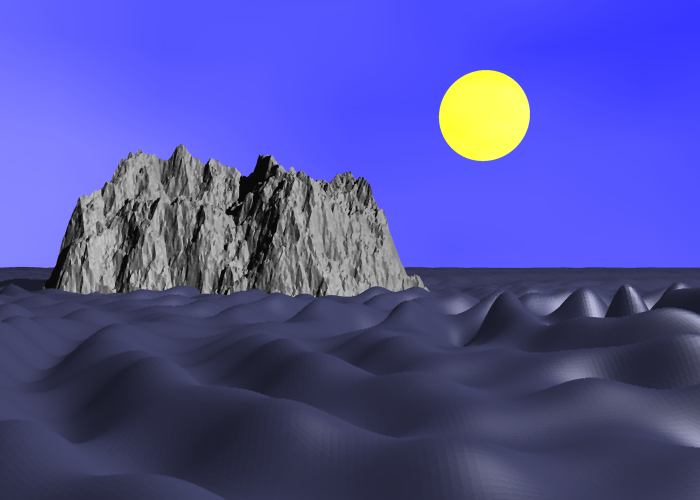

My idea was to write a program which could produce pictures of those wonderful artificial worlds one saw on posters of the time, hung in every university dorm room. Raytracers render highly realistic images, and fractals produce interesting shapes to render.

I set about designing the program, with all the features I wanted. In the object-oriented language of the day (Objective-C) I envisaged a Shape class with various subclasses to represent the various objects the program should be able to render: spheres, fractal landscapes, etc. The fractal landscapes would be further subdivided into the more random, mountainous landscapes and, the crowning glory, a 3-dimensional landscape based on one of those particularly recognizable fractal shapes such as the Mandelbrot set.
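That class design might be sketched roughly as follows. This is an illustration in Python rather than the original Objective-C, and everything beyond the name `Shape` (the `intersect` method, the `Sphere` and `FractalLandscape` classes, the `height_at` method) is an assumption made for the example, not a reconstruction of the actual program.

```python
import math
from abc import ABC, abstractmethod
from typing import Optional, Tuple

Vec = Tuple[float, float, float]


def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


class Shape(ABC):
    """Anything the raytracer can render (hypothetical interface)."""

    @abstractmethod
    def intersect(self, origin: Vec, direction: Vec) -> Optional[float]:
        """Distance along the ray to the nearest hit, or None on a miss."""


class Sphere(Shape):
    def __init__(self, center: Vec, radius: float) -> None:
        self.center = center
        self.radius = radius

    def intersect(self, origin: Vec, direction: Vec) -> Optional[float]:
        # Assumes `direction` has unit length, so the quadratic's leading
        # coefficient is 1. Rays starting inside the sphere are ignored.
        oc = sub(origin, self.center)
        b = 2.0 * dot(oc, direction)
        c = dot(oc, oc) - self.radius ** 2
        disc = b * b - 4.0 * c
        if disc < 0:
            return None
        t = (-b - math.sqrt(disc)) / 2.0
        return t if t > 0 else None


class FractalLandscape(Shape):
    """Abstract base for landscapes; subclasses supply the heights."""

    @abstractmethod
    def height_at(self, x: float, y: float) -> float:
        """Terrain height at a ground position (hypothetical method)."""
```

The point of the sketch is the layering: the renderer only talks to `Shape`, so each landscape type is a thin subclass on top of shared machinery.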

After the design was completed, I set about the implementation. There was the basic algorithm of the raytracer to implement. I wrote a small custom DSL based on Tcl to allow the user to programmatically specify scenes based on the Shapes available. The rendering of landscapes was quite a challenge, especially in terms of performance. Landscapes were composed of tiny triangles, and calculating which of them should be displayed per pixel in reasonable time required a fancier algorithm than just iterating over all of them per pixel. I devised an appropriately fancy algorithm, then programmed it successfully.

So far so good, but then disaster struck: I ran out of time. The only hope of delivering something by the deadline was reducing functionality somehow. But what could I leave out? I couldn't create landscapes in memory and have no way to render them: such a program would produce no output and thus look like it didn't do anything at all. So I needed the raytracing algorithm. I couldn't just program the part to generate Mandelbrot landscapes but leave out the fancy algorithm to convert their triangles to pixels. So I needed the fancy algorithm.

So in the end I left out the small piece of code to generate the Mandelbrot landscapes. But I had all the supporting software which would be required for the result of that small piece of code to be rendered.
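For a sense of scale, a Mandelbrot height generator can be sketched in a handful of lines. The Python below is an illustration only, not the code that was left out: the function names, the sampled region of the complex plane, and the choice of using the escape iteration count as the height are all assumptions.

```python
from typing import List


def mandelbrot_height(cx: float, cy: float, max_iter: int = 50) -> int:
    """Escape-time count for the point cx + cy*i, used here as a height."""
    z = 0j
    c = complex(cx, cy)
    for i in range(max_iter):
        z = z * z + c
        if abs(z) > 2.0:
            return i  # escaped: lower counts mean lower terrain
    return max_iter  # never escaped: treat as the highest plateau


def mandelbrot_heightmap(width: int, height: int,
                         max_iter: int = 50) -> List[List[int]]:
    """Grid of heights over the region [-2, 1] x [-1.5, 1.5].

    Assumes width >= 2 and height >= 2 so the grid spacing is defined.
    """
    rows = []
    for row in range(height):
        cy = -1.5 + 3.0 * row / (height - 1)
        rows.append([
            mandelbrot_height(-2.0 + 3.0 * col / (width - 1), cy, max_iter)
            for col in range(width)
        ])
    return rows
```

A grid like this would still need to be turned into triangles and handed to the renderer, which is exactly the supporting work that was already done.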

So what had I achieved?

- Created an architecture and algorithms to do what I wanted (= render Mandelbrot landscapes)

- Spent 95% of the time I would have had to spend to achieve what I wanted (= render Mandelbrot landscapes)

- Produced a program which did not do what I wanted (= render Mandelbrot landscapes)

I think this is often the way with software:

- The "crowning glory" (which is the reason you wrote the program in the first place) is often a tiny piece of work, sitting on a great body of other supporting work

- You can't have the "crowning glory" without the other support work. (However, you can have the other support work without the "crowning glory".)

- Therefore, if you have to cut something, you have no option but to cut the "crowning glory".

- Software projects go over budget pretty often, forcing you to cut something.

Alas, I don't know what the solution to this problem is.

It's a bit like spending two hours preparing a curry but then saving 20 seconds by not adding salt, so the result doesn't actually taste nice.

I think one should bear in mind that, because often the only thing one can realistically cut from software is the "crowning glory" feature, one frequently ends up cutting 5% of the effort but also the main purpose of the software, which is a poor trade-off.

Cutting pointless features

A seeming counter-example to the above, from my professional career, is when a product manager, with little understanding of the costs required to produce software, and little self-restraint, insists on a dizzying array of features that the user "might need" and without which they believe they "cannot ship".

Cutting such features definitely helps the product, making it easier for the users to find the features they do actually need, without having to wade through said dizzying array of features they do not need. (In addition to allowing the users to continue to use the product at all, due to the company producing it not yet being bankrupt.)

The difference is that cutting a feature such as "users see feeds of their activities and their friends' activities" before it is built allows a great number of supporting elements to be cut as well, for example modelling which activities a user has done or which friends a user has.

So I'd argue that cutting features before implementation has begun is good, as it leads to a cleaner user interface and a longer-lived company. Simply running out of time and leaving out the crowning-glory feature, for which all the supporting work has already been done, is bad.